Although the datasette is a device that every Commodore retro-computer fan knows, feelings about it can be mixed, mostly because loading from tape can take a long time (although, technically, there were very good reasons for that).

The problems most people remember come from the use of fastloaders. A fastloader is nothing more than a loading protocol that differs from the Commodore kernal loader and favors data transfer speed over reliability. Because of this, fastloaders operate near the edge of what is technically possible on an ordinary datasette, which means that in many cases a fastloader failed if the user had even a minor problem in the system. The most common problem was the dreaded head alignment (azimuth) problem, since the slightest deviation from perfection reduced the reproduction of the high-frequency signals. The standard, slow Commodore kernal tape loader did not really have such issues, as it used lower signal frequencies and a more complex definition of pulses to encode a single bit. The kernal tape loader also did not have issues with tape speed, as the 10-second leader at the start of each recording allowed the system to measure and compensate for tape-speed-related timing errors. Fastloaders didn't always have that functionality, so tape speed differences between saving and playback could become a problem under certain conditions.

I never had a tape speed problem...

Many Commodore users will tell you that tape speed isn't such a big problem in real life. Because, hey, it works, doesn't it? But how often do they really use tapes written on another datasette? And if a tape doesn't work, how would they know the failure was caused by a mismatch between recording and playback speed? There are most likely many other problems that are much worse: head alignment, damaged tapes, worn belts, dirty heads and rollers.

Back in the day, programmers who made a master tape on their datasette and then sent it off to a tape production facility did not use a 40-year-old datasette. Their machine was most likely still in perfect working order, and its speed calibration was most likely still within factory specifications. But if we make a master tape today, we are using a 40-year-old datasette, so shouldn't we at least check the tape speed? After all, in 40 years anything could have happened. Most users already clean the heads, replace the belts and adjust the azimuth of the tape head, but how many actually check the speed calibration? There isn't much information about it, most likely because the tools you need are not found in the toolbox of the average retro computer collector.

But how bad is it? A few percent of error, who cares? Assume that your datasette runs 5% too fast. You record a tape, rewind it and play it back on your own machine, and everything is perfect: you are playing it back with the same speed error, so the signals being played back are identical to the ones that were recorded. But if you play that tape back on another datasette, one that runs 5% too slow, there is "suddenly" a total speed error of roughly 10%. That is a lot!
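The effect of the two speed errors can be checked with a little arithmetic. The Python sketch below is only an illustration and not part of the C64 software; the function name is hypothetical. It assumes that the frequency heard on playback scales with the ratio of playback speed to recording speed. For the example above the exact result is a pitch about 9.5% too low, which is why the simple 5% + 5% sum is only "roughly" right.

```python
def playback_frequency_ratio(record_error: float, playback_error: float) -> float:
    """Return f_playback / f_recorded for two fractional speed errors.

    An error of +0.05 means a deck runs 5% fast, -0.05 means 5% slow.
    A tone recorded at (1 + record_error) times nominal speed is stored
    stretched on the tape; playing it at (1 + playback_error) times nominal
    speed reproduces it at f * (1 + playback_error) / (1 + record_error).
    """
    return (1.0 + playback_error) / (1.0 + record_error)


# Same deck for recording and playback: the errors cancel out.
print(playback_frequency_ratio(+0.05, +0.05))  # 1.0

# Recorded on a deck 5% fast, played back on a deck 5% slow.
ratio = playback_frequency_ratio(+0.05, -0.05)
print(f"pitch error: {(ratio - 1.0) * 100:.1f} %")  # pitch error: -9.5 %
```

For small errors the individual deviations simply add up, so two decks that are each "only" a few percent off can easily push a fastloader past its timing margins.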

So, if you release your latest game recorded on your own datasette, shouldn't you make sure the tape is recorded at the proper speed? Although you cannot control the speed of the datasette used for playback, you can control the device used for recording and thereby reduce the possible playback speed error.

How does it work?

In order to measure something, there has to be a reference. Therefore tape speed cannot easily be measured without a reference tape. Although the video shows that there are many methods to achieve the same goal, they aren't very accurate or practical, to say the least. The most practical method is to insert a reference tape with a known signal and play it on the machine that needs to be tested. The reference tape I used had a reference signal of 3150 Hz, which is not uncommon in the audio world. The fact that it is a stereo recording does not matter: although the 1530 and 1531 are mono devices, the layout of the tracks on the tape allows stereo recordings to be played back on mono equipment.


If the frequency of the signal coming from the machine is too high, the tape is being played back too fast. If the frequency is too low, the tape is being played back too slow. Normally the signal frequency is measured with a dedicated frequency counter, or with an oscilloscope or multimeter that has a frequency measurement function.

However, the average retro computer collector does not have access to such equipment. The reference tape itself is something you cannot do without; there is no easy substitute for it. The special hardware (oscilloscopes or meters), on the other hand, is something you can do without, because the computer connected to the datasette (the Commodore 64) already has everything it needs to act as a frequency counter. All we need is a piece of software, which I wrote especially for this occasion.
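Once a frequency has been measured, turning it into a speed deviation is a single division against the 3150 Hz reference signal. The Python sketch below is illustrative only: the helper names and the ±0.5% tolerance are hypothetical choices, not values from the article or from any datasette specification.

```python
REFERENCE_HZ = 3150.0  # reference tone on the calibration tape used in this article


def speed_error_percent(measured_hz: float, reference_hz: float = REFERENCE_HZ) -> float:
    """Positive: tape played back too fast; negative: too slow."""
    return (measured_hz / reference_hz - 1.0) * 100.0


def diagnose(measured_hz: float, tolerance_percent: float = 0.5) -> str:
    """Classify a reading; the tolerance is a hypothetical example value."""
    error = speed_error_percent(measured_hz)
    if error > tolerance_percent:
        verdict = "too fast"
    elif error < -tolerance_percent:
        verdict = "too slow"
    else:
        verdict = "within tolerance"
    return f"{measured_hz:.0f} Hz -> {error:+.2f} % ({verdict})"


for reading in (3213, 3150, 3087):
    print(diagnose(reading))
```

A reading of 3213 Hz, for example, corresponds to a deck running 2% fast, and 3087 Hz to one running 2% slow.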


How does the software work

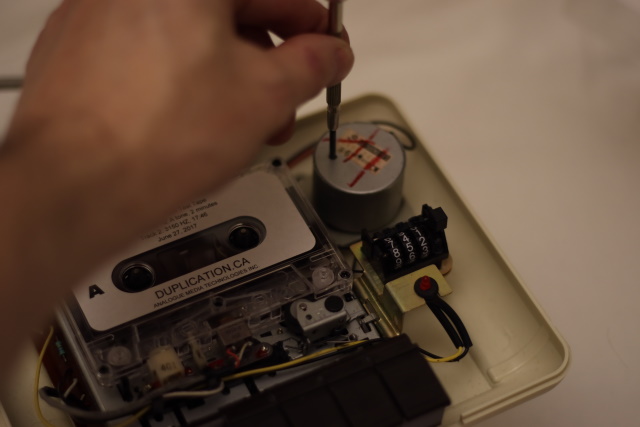

The software, running on the Commodore 64, uses the CIA timers to measure a time of exactly 1 second. During this second all pulses from the tape are counted. Because a 1-second window is relatively long, wow and flutter variations are averaged out and have no significant influence on the frequency measurement. And because frequency is defined as the number of pulses per second, the counted value is also the frequency value. You cannot get more basic than that. One measurement per second may seem slow to some, but it is more than adequate for this purpose, since adjusting the potentiometer in the motor isn't a very speedy process in itself.
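The gate-counting principle can be tried out off-line. The Python sketch below is a hypothetical model, not the C64 program: it generates pulse timestamps for a 3150 Hz tone whose speed wobbles sinusoidally (a crude stand-in for wow and flutter, with made-up depth and rate) and counts the pulses inside a window. A 1-second gate yields the frequency directly, while a short 50 ms gate, scaled up to Hz, gives a coarser and less stable reading, because one pulse more or less already shifts it by 20 Hz.

```python
import math


def edge_times(freq_hz: float, duration_s: float,
               flutter_depth: float = 0.003, flutter_hz: float = 4.0) -> list:
    """Timestamps of successive pulses of a tone whose speed wobbles sinusoidally.

    Hypothetical flutter model: the instantaneous frequency is
    freq_hz * (1 + flutter_depth * sin(2*pi*flutter_hz*t)).
    """
    times = []
    t = 0.0
    while t < duration_s:
        inst_hz = freq_hz * (1.0 + flutter_depth * math.sin(2.0 * math.pi * flutter_hz * t))
        t += 1.0 / inst_hz
        times.append(t)
    return times


def gated_count(times: list, gate_start: float, gate_s: float = 1.0) -> int:
    """Count the pulses that fall inside one measurement window (the 'gate')."""
    return sum(1 for t in times if gate_start <= t < gate_start + gate_s)


pulses = edge_times(3150.0, duration_s=2.5)
for start in (0.0, 0.3, 0.7, 1.2):
    one_second = gated_count(pulses, start, 1.0)     # pulse count == frequency in Hz
    short = gated_count(pulses, start, 0.05) / 0.05  # 50 ms window scaled to Hz
    print(f"gate at {start:.1f} s: 1 s -> {one_second} Hz, 50 ms -> {short:.0f} Hz")
```

In this model the 1-second counts stay within a pulse of 3150 regardless of where the gate starts, since the window spans whole flutter periods.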

There is one important thing to know when using the CIA timers inside the C64: their accuracy depends on the clock frequency of the C64. This frequency is very accurate and is also used to generate the computer's video signal. That, however, means that since there are two different video systems (PAL and NTSC), there are two possible clock frequencies for the C64. By determining which type of video signal the computer generates, we can determine what the clock frequency of the system must be, and with that knowledge we can configure the CIA timer registers to generate a measurement window of exactly 1 second.
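Because the CIA timers are 16 bits wide, a full second (roughly a million clock cycles) does not fit in a single timer. One common arrangement, assumed here rather than taken from the article's source code, is to let Timer B count the underflows of Timer A, so the gate lasts (Timer A period) × (Timer B period) cycles. The Python sketch below searches for such a pair for the PAL and NTSC clocks; the clock values are the commonly quoted approximate figures, and the "latch = period - 1" remark should be checked against the 6526 datasheet.

```python
PAL_CLOCK_HZ = 985_248     # C64 PAL system clock, approx. 17.734475 MHz / 18
NTSC_CLOCK_HZ = 1_022_727  # C64 NTSC system clock, approx. 14.31818 MHz / 14
MAX_PERIOD = 65_536        # assumed longest period of a 16-bit CIA timer


def one_second_periods(clock_hz: int) -> tuple:
    """Search Timer A / Timer B periods whose product is closest to clock_hz.

    Assumed (hypothetical) setup: Timer A counts system clock cycles and
    underflows every `a` cycles; Timer B counts Timer A underflows and
    underflows every `b` of them. Returns (a, b, error_in_cycles).
    """
    best = (0, 0, clock_hz)
    for a in range(2, MAX_PERIOD + 1):
        b = round(clock_hz / a)
        if not 1 <= b <= MAX_PERIOD:
            continue
        error = abs(a * b - clock_hz)
        if error < best[2]:
            best = (a, b, error)
            if error == 0:
                break
    return best


for name, clock in (("PAL", PAL_CLOCK_HZ), ("NTSC", NTSC_CLOCK_HZ)):
    a, b, err = one_second_periods(clock)
    # On the 6526 the value written to the latch is commonly period - 1;
    # verify this detail against the datasheet before relying on it.
    print(f"{name}: Timer A period {a}, Timer B period {b}, "
          f"gate error {err} cycles ({err / clock * 1e6:.1f} ppm)")
```

For the PAL clock an exact pair exists (16 × 61578 cycles). For NTSC the search only finds a close approximation, but an error of a few cycles per million is far below anything relevant for a datasette speed adjustment.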

It is important to know that this measurement method can only work properly if a good-quality reference tape is used, since we are basically calibrating our machine to the machine the tape was made on. I bought my reference tape from a real tape manufacturer. Do not be tempted to search for reference tapes on eBay or similar sites: such tapes may very well have been made on equipment of dubious quality, and a copy of a reference tape isn't a reference tape! Some people might suggest using the 10-second leader of an original (not a copy) Commodore tape game. Although the 10-second leader sounds usable and its frequency is well defined, 10 seconds is very short, so you would be constantly rewinding the tape to play the same 10 seconds over and over again. The main issue, however, is that the tape mechanism requires a few seconds to stabilize. You cannot expect a system to go from a full stop to perfect speed in an instant; there are all sorts of small oscillations that need to smooth out. On top of that, playing back the same piece of tape over and over again may lead to wear and stretching of the tape, which can make the measurement inaccurate. Technically, or to be more precise "in theory", it is not impossible. Practically, you'd be better off playing the same song at the same time on a CD player and on your datasette and trying to keep them in perfect sync. However, finding exactly the same recording on two different kinds of media is a bit of a challenge, and doing the calibration that way requires a lot of patience and concentration.


Advantage of a software solution

The advantage of using software on the C64 instead of a frequency counter, multimeter or oscilloscope is that you do not need another expensive tool. The software is free (a reference tape is needed anyway). But the main advantage is that you do not need to open the datasette to do a quick check of whether it is running at the proper speed. Just insert the tape and run the program to read the value. No screwdriver, no hassle if you just want a quick check of the speed setting.

Software side note

Despite reading the datasheet from front to back and visiting various websites (but most likely not the right ones), there is one tiny little problem I was not able to solve properly, although I was able to work around it. The software sets the CIA timer to trigger after exactly 1 second, which raises a flag that is detected by the software, upon which the counted value is printed to the screen and the whole cycle repeats. However, sometimes the timer event is missed (not generated? lost in time and space?) and the counting continues well beyond the 1-second window. Since such a value is not correct, it must not be used. Because I could not find the true origin of this problem, I created a sort of watchdog counter in the main loop: if I fail to detect the 1-second timer event, the watchdog wakes up, the measurement is discarded and a new measurement is started. This happens rarely and is handled so smoothly that you'll never even notice it. For debugging purposes, however, I print a single debug character in the top-left corner of the screen; if you see a character appear or change, you'll know the watchdog triggered. This does not affect the accuracy of the measurement at all, since incorrect measurements are never shown, but from a programming perspective I would like to understand what happens and how it could be solved properly. MOS (Commodore) has a history of releasing chips with bugs; one of these bugs was the reason Commodore switched the IEC serial port from hardware to bit-banging at the very last moment before production, so I would not be surprised if the CIA timer had a similar problem. At this moment, though, I expect it to be a problem I created myself; I just don't see it yet. If you have any tips, please let me know.
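The Python sketch below is not the author's code and not a diagnosis of the actual bug. It models one documented 6526 behavior that may be worth ruling out: reading the interrupt control register (ICR) returns and clears all latched flags at once. If the ICR is read in more than one place (in the main loop, or in an interrupt handler that is still enabled), a Timer B flag that latched just before the "wrong" read is silently lost, which could look like an occasional missed timer event. The model also includes a watchdog like the one described above. All names are hypothetical; the bit values follow the 6526 ICR layout (bit 1 Timer B, bit 4 FLAG).

```python
import itertools

FLAG = 0x10     # ICR bit 4: FLAG pin (cassette read line on CIA 1)
TIMER_B = 0x02  # ICR bit 1: Timer B underflow


class ToyICR:
    """Toy model of the 6526 interrupt control register: event bits stay
    latched until the register is read, and every read clears ALL of them."""

    def __init__(self) -> None:
        self._latched = 0

    def latch(self, mask: int) -> None:
        self._latched |= mask

    def read(self) -> int:
        value, self._latched = self._latched, 0
        return value


def events(timer_step: int, timer_early: bool, pulse_every: int = 3):
    """Yield (before_first_read, between_reads) event masks for each loop pass."""
    for step in itertools.count():
        early = FLAG if step % pulse_every == 0 else 0
        late = 0
        if step == timer_step:
            if timer_early:
                early |= TIMER_B
            else:
                late |= TIMER_B
        yield early, late


def measure(stream, read_once: bool, watchdog_limit: int = 1000):
    """Count FLAG pulses until Timer B fires; None means the watchdog expired."""
    icr = ToyICR()
    count = 0
    for passes, (early, late) in enumerate(stream):
        if passes >= watchdog_limit:
            return None  # watchdog: discard this measurement
        icr.latch(early)
        if read_once:
            status = icr.read()   # one read, then test every bit of interest
            icr.latch(late)       # picked up by the next pass's read
            if status & FLAG:
                count += 1
            if status & TIMER_B:
                return count
        else:
            if icr.read() & FLAG:     # this read also clears a pending TIMER_B...
                count += 1
            icr.latch(late)
            if icr.read() & TIMER_B:  # ...so here it is only seen if it arrived late
                return count
    return None


for early in (False, True):
    print("timer early" if early else "timer late",
          measure(events(30, early), read_once=True),
          measure(events(30, early), read_once=False))
```

With a single read per pass, both runs end at the gate with 11 pulses. With two separate reads, the run where the timer flag latched before the first read never sees it, and only the watchdog ends the measurement. Whether anything like this happens in the real program can only be settled by checking every place where the ICR is read.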

Downloads:

I've written this program using CBM Program Studio; below are the executable and the source code.

The .PRG file: TapeFreqCounter_v1.0.prg

The source code: Sourcecode_v1.0.zip